Manual 01 · AI as a tool

Use AI well: prompt, flow, fact-check, stress-test.

An LLM is the most useful general-purpose instrument an operator has been handed in twenty years. Used badly, it produces confident, fluent, slightly wrong work that no one notices until the cost shows up. What follows is the eight-step process for using it well, plus the second-brain stack Stan runs in the background.

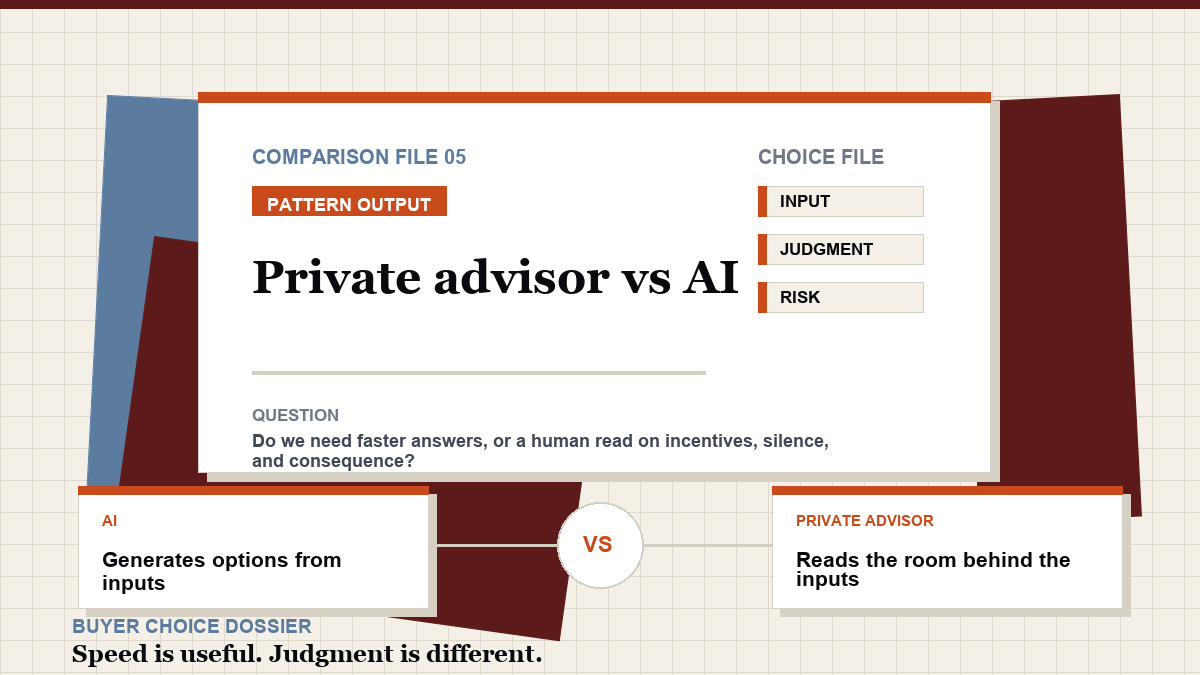
